Meta Platforms is building more safeguards to protect teen users from unwanted direct messages on Instagram and Facebook, the social media platform said yesterday.

The move comes weeks after the WhatsApp owner said it would hide more content from teens. That decision followed pressure from regulators on the world’s most popular social media network to protect children from harmful content on its apps, a Reuters report stated.

The regulatory scrutiny increased following testimony in the US Senate by a former Meta employee who alleged the company was aware of harassment and other harm facing teens on its platforms but failed to act against them.

Meta said that, by default, teens on Instagram will no longer receive direct messages from anyone they do not follow or are not connected to. They will also need parental approval to change certain settings on the app.

On Messenger, accounts of users under 16, or under 18 in some countries, will only receive messages from Facebook friends or people they are connected to through phone contacts.

Adults over the age of 19 cannot message teens who don’t follow them, Meta added.

MIB extends by 4 weeks ban on news channels’ TRP by BARC India

MIB extends by 4 weeks ban on news channels’ TRP by BARC India  Reliance eyes LEO satellite play to rival Starlink in India: ET report

Reliance eyes LEO satellite play to rival Starlink in India: ET report  FIFA offered $20mn for WC’26 broadcast rights for India market

FIFA offered $20mn for WC’26 broadcast rights for India market  IPL franchise Rajasthan Royals get new owners in Mittals, Poonawalla

IPL franchise Rajasthan Royals get new owners in Mittals, Poonawalla  Netflix leads India’s 2025 theatrical streaming race: Ormax study

Netflix leads India’s 2025 theatrical streaming race: Ormax study  Network18 tops counting day with 2M+ peak YouTube viewers

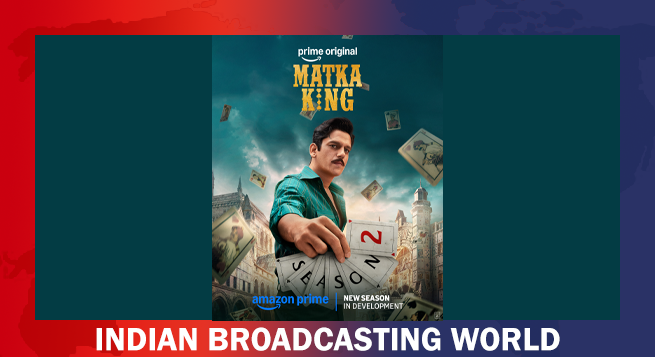

Network18 tops counting day with 2M+ peak YouTube viewers  ‘Matka King’ S2 announced after strong global response on Prime Video

‘Matka King’ S2 announced after strong global response on Prime Video  Prime Video to integrate MX Player into unified streaming platform

Prime Video to integrate MX Player into unified streaming platform  Raghav Raj Kodesia joins Netflix to lead Original Films and Acquisitions

Raghav Raj Kodesia joins Netflix to lead Original Films and Acquisitions  Amagi launches in-content ad marketplace to expand CTV advertising push

Amagi launches in-content ad marketplace to expand CTV advertising push